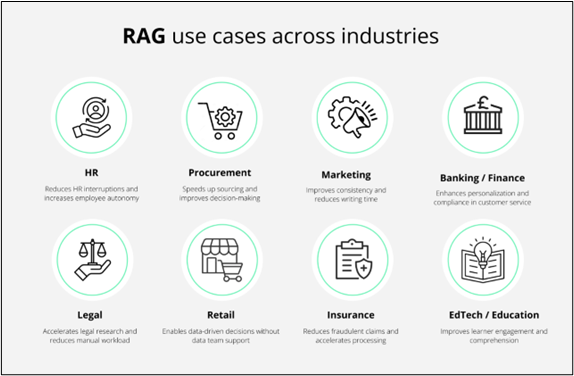

Did you know that the RAG market is estimated to reach 40 billion by 2035? That growth widens the job scope for gen AI professionals and RAG engineers. As industries evolve, demand is rising for gen AI experts who can enhance LLMs with knowledge graphs in 2026.

Retrieval-augmented generation (RAG) has transformed the landscape of language models by merging two powerful elements: the retrieval of pertinent information and the generation of coherent, well-founded responses. However, like many revolutionary concepts, the initial phase of Advanced RAG implementation was merely the starting point.

Understanding LLMs

Currently, industries are experiencing a wave of innovation that extends beyond basic retrieval and response frameworks, so it helps to understand how LLMs can be enhanced with knowledge graphs. Large language models (LLMs) have demonstrated significant utility across a range of applications, including content generation and question-and-answer systems. The attention mechanism enables transformer-based models to produce coherent sentences and engage in dialogue.

Nonetheless, LLMs face challenges with factual accuracy, and the issue of hallucination frequently arises. Knowledge graphs (KGs) assist in structuring and visually depicting information. The integration of LLMs and KGs presents a promising strategy for addressing the prevalent issues faced by LLMs, thereby enhancing their effectiveness in information retrieval tasks.

A Brief on Knowledge Graphs with Graph-based Retrieval Systems

A knowledge graph (KG) is a system that organizes a knowledge base through graph-based data structures to depict and retain the essential information. The graph's nodes symbolize entities, such as objects, locations, individuals, and more. The edges illustrate the connections between various entities.

KGs are often referred to as semantic networks due to their ability to encapsulate information regarding the relationships among different entities. Below are some characteristics of KGs.

- Semantic relationships: These denote the links between two nodes (entities) grounded in the meaning (semantics) of those entities. For instance, a person and a location can be connected through the semantic relationship “lives in.”

- Queryable structures: The data within a knowledge graph is generally (though not exclusively) stored in graph databases. It can be accessed efficiently using graph query languages such as SPARQL and Cypher. These languages make it easy to write and run queries like “find a person’s 3rd-degree connections in city X.” In contrast, relational and document databases struggle to process such queries efficiently.

- Scalability for managing extensive information: Knowledge bases encompass vast amounts of data from diverse sources, which tend to expand over time. Consequently, knowledge graphs are engineered to be scalable, accommodating the intricacies of the underlying data.

- Semantic search: Because relationships between entities are stored explicitly, a search over a knowledge graph can match on meaning and return more accurate results.
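
To make the “3rd-degree connections in city X” query concrete, here is a minimal sketch in plain Python. It stores a toy knowledge graph as (subject, relationship, object) triples and walks the “knows” edges breadth-first. The names, cities, and helper functions are all hypothetical, and a real graph database would answer the same question with a SPARQL or Cypher query rather than hand-written traversal code.

```python
from collections import deque

# Hypothetical toy graph stored as (subject, relationship, object) triples.
TRIPLES = [
    ("alice", "knows", "bob"),
    ("bob", "knows", "carol"),
    ("carol", "knows", "dave"),
    ("dave", "knows", "erin"),
    ("alice", "lives_in", "paris"),
    ("bob", "lives_in", "paris"),
    ("carol", "lives_in", "berlin"),
    ("dave", "lives_in", "paris"),
    ("erin", "lives_in", "paris"),
]


def neighbors(person):
    """People linked to `person` by a 'knows' edge, in either direction."""
    found = set()
    for subj, rel, obj in TRIPLES:
        if rel != "knows":
            continue
        if subj == person:
            found.add(obj)
        elif obj == person:
            found.add(subj)
    return found


def lives_in(person):
    """City attached to `person` through a 'lives_in' edge, or None."""
    for subj, rel, obj in TRIPLES:
        if subj == person and rel == "lives_in":
            return obj
    return None


def nth_degree_connections(start, degree, city):
    """People exactly `degree` hops from `start` who live in `city`."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if distance[current] == degree:
            continue  # do not expand beyond the requested degree
        for other in neighbors(current):
            if other not in distance:
                distance[other] = distance[current] + 1
                queue.append(other)
    return sorted(
        p for p, d in distance.items() if d == degree and lives_in(p) == city
    )


print(nth_degree_connections("alice", 3, "paris"))
```

The entities are nodes, the “knows” and “lives_in” triples are edges, and the query combines both kinds of relationship in one traversal.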

How Knowledge Graphs and LLMs Work Together

Large Language Models (LLMs) can produce coherent text, yet they lack the ability to verify its factual correctness. Typically, the initial training datasets do not include specific information (like a user manual) that pertains to practical tasks (such as guiding users on how to operate a particular tool).

An LLM that has access to contextual and domain-specific data can leverage that information to generate accurate and meaningful responses. Knowledge Graphs (KGs) enable LLMs to programmatically retrieve relevant and factual data, thereby enhancing their ability to address user inquiries.

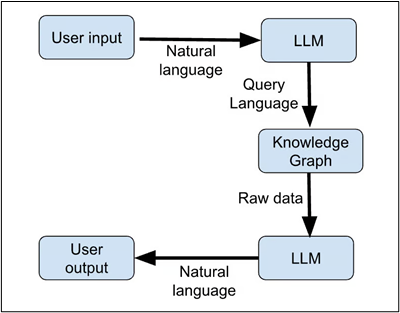

Knowledge graphs excel at semantically organizing, storing, and retrieving information. However, they are typically accessed through specialized query languages such as SPARQL, which is designed for manipulating and retrieving data stored in the RDF format.

The output generated by KGs is also in a coded format, requiring users to have specific training to understand it. LLMs help bridge this gap by translating plain-language user requests into query language and by producing human-readable text from the output of the KG. This functionality allows non-technical users to interact with KGs.

The text produced by LLMs is influenced by the training dataset, which often becomes outdated by the time the LLM is deployed. Continuously retraining LLMs with current information is resource-intensive and often impractical.

In contrast, KGs, like any other database, can be updated in real time with relative ease. The computational overhead for updating the connections between nodes based on new information is minimal. Therefore, by integrating with KGs, LLMs can access real-time updated information and deliver timely responses. Here is a representation of a workflow that uses KGs and LLMs to respond to user queries.
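
The workflow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a real integration: the two LLM steps (`translate_to_query` and `verbalize`) are hypothetical stand-ins implemented with simple rules, and the knowledge graph is a plain dictionary rather than a graph database. The device name and firmware versions are invented.

```python
# Toy knowledge graph: (subject, relationship) -> object. Hypothetical data.
kg = {("acme_router", "latest_firmware"): "2.1"}


def translate_to_query(question):
    """Stand-in for the LLM step that turns plain language into a graph query.

    A real system would prompt a model to emit SPARQL or Cypher; here a
    keyword rule produces a (subject, relationship) lookup key instead.
    """
    if "firmware" in question.lower():
        return ("acme_router", "latest_firmware")
    return None


def run_query(graph, query):
    """Stand-in for executing the query against a graph database."""
    return graph.get(query)


def verbalize(fact):
    """Stand-in for the LLM step that turns the raw result into prose."""
    if fact is None:
        return "I could not find that in the knowledge graph."
    return f"The latest firmware version is {fact}."


def answer(graph, question):
    """Question -> graph query -> retrieved fact -> readable response."""
    query = translate_to_query(question)
    fact = run_query(graph, query) if query else None
    return verbalize(fact)


question = "What is the newest firmware for the router?"
print(answer(kg, question))

# Updating the graph changes the next answer; no model retraining is involved.
kg[("acme_router", "latest_firmware")] = "2.2"
print(answer(kg, question))
```

The second call returns the new version because only the graph changed, which is the point of keeping fast-moving facts in the KG instead of in the model's training data.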

Use Cases of Knowledge Graphs in Gen AI

Knowledge Graphs (KGs) offer a well-organized and current repository of information that a chatbot can programmatically utilize to obtain up-to-date data and deliver more pertinent responses to user inquiries.

The KG enhances the chatbot’s inherent knowledge, which is derived from the training dataset. The chatbot learns to formulate sentences based on this dataset while gaining application-specific insights from the KG. Consequently, employing KGs enables the same chatbot framework to be utilized across various applications without the need for retraining.

Large Language Models (LLMs) have been employed as recommendation systems. For instance, an LLM can evaluate the content of an article and suggest related articles. Additionally, LLMs are utilized to develop interactive systems, allowing users to engage in conversation with the recommendation engine.

KGs can systematically arrange and retain data regarding user behavior, interests, interactions, and more. LLMs can process this data to produce customized responses. As a result, users benefit from personalized recommendations tailored to their unique preferences.
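
As a rough sketch of how this might look, the Python below stores user interests and article topics as triples, selects unread articles that match the user's interests, and assembles the text an LLM would receive. All identifiers and data are hypothetical, and no model is actually called; the prompt is only built and printed.

```python
# Hypothetical triples describing user interests and article topics.
INTEREST_TRIPLES = [
    ("user_1", "interested_in", "graph databases"),
    ("user_1", "interested_in", "llms"),
    ("user_1", "has_read", "article_a"),
    ("article_a", "about", "llms"),
    ("article_b", "about", "graph databases"),
    ("article_c", "about", "cooking"),
    ("article_d", "about", "llms"),
]


def objects(subject, relation):
    """All objects linked to `subject` through `relation`."""
    return {o for s, r, o in INTEREST_TRIPLES if s == subject and r == relation}


def candidate_articles(user):
    """Unread articles whose topic matches one of the user's interests."""
    interests = objects(user, "interested_in")
    already_read = objects(user, "has_read")
    articles = {
        s for s, r, o in INTEREST_TRIPLES if r == "about" and o in interests
    }
    return sorted(articles - already_read)


def build_prompt(user):
    """Assemble the context an LLM would receive; no model is called here."""
    interests = ", ".join(sorted(objects(user, "interested_in")))
    candidates = ", ".join(candidate_articles(user))
    return (
        f"The user is interested in: {interests}.\n"
        f"Unread candidate articles: {candidates}.\n"
        "Recommend one article and explain why in one sentence."
    )


print(build_prompt("user_1"))
```

The graph does the filtering, so the model only has to choose among relevant candidates and phrase the recommendation.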

In knowledge-intensive fields such as medicine, finance, and law, the combination of LLMs and KGs enhances the accessibility of information. In the medical field, such systems can propose potential diagnoses based on patient symptoms and medical history. In finance, they assist analysts by simplifying the retrieval of relevant information from financial documents. In the legal domain, they support lawyers by helping to find pertinent past cases and rulings, as well as summarizing extensive legal texts.

Tips for Combining Knowledge Graphs and LLMs

The knowledge graph (KG) serves as the information repository that the large language model (LLM) relies on to generate its responses. Consequently, it is essential to keep the graph database updated with the most current information. An outdated KG leads to the LLM providing responses that are no longer relevant.

Both LLMs and KGs are intricate software systems that demand significant computational resources. The process of linking LLMs with KGs involves multiple steps, including tokenizing the user's query, creating the appropriate graph query, retrieving data from the KG, and then generating text based on that data. Each step adds latency between the initial query and the final response. Below are several strategies to address these challenges.

- Caches are utilized to store data that is frequently accessed in high-speed memory, eliminating the need to recalculate results that are commonly used.

- Key-value stores can be employed to retain the outcomes of frequent queries to the KG.

- LLM embeddings for typical input queries can be cached to prevent repeated access to the LLM API, which conserves both time and expenses.

- Optimizing queries involves rewriting database queries and employing appropriate indexes to enhance database access efficiency.

- It is crucial to ensure that the selected language model is capable of effectively handling the types of queries it will encounter.

- Indexes facilitate quick access to graph components such as nodes, relationships, and their attributes.

- Query parameterization involves creating and caching optimized versions of common query structures, using placeholders for user-specific query terms.

- Asynchronous querying allows the LLM to proceed without waiting for the KG to provide a response.

- Load balancing aids in distributing user queries across multiple replica databases and language models.
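
Three of the strategies above (caching, query parameterization, and asynchronous querying) can be illustrated together with the Python standard library. This is a sketch under assumptions: `FAKE_DB` is a hypothetical stand-in for a graph database, the Cypher text in `QUERY_TEMPLATE` is illustrative and is never sent anywhere, and `run_query` only simulates execution. `asyncio.to_thread` requires Python 3.9 or later.

```python
import asyncio
from functools import lru_cache

# Parameterized query template: the structure is fixed, only $city varies.
# The Cypher text is illustrative and is not sent to a real database here.
QUERY_TEMPLATE = (
    "MATCH (p:Person)-[:LIVES_IN]->(c:City {name: $city}) RETURN p.name"
)

# Hypothetical stand-in for the graph database contents.
FAKE_DB = {"paris": ("alice", "bob"), "berlin": ("carol",)}


@lru_cache(maxsize=1024)
def run_query(template, city):
    """Pretend to execute `template` with the parameter `city`.

    Results are cached per (template, city) pair, so a repeated request
    is answered from memory instead of reaching the database again.
    """
    return FAKE_DB.get(city, ())


async def run_query_async(template, city):
    """Run the blocking lookup in a worker thread so other work can continue."""
    return await asyncio.to_thread(run_query, template, city)


async def main():
    # Both lookups are issued concurrently instead of one after the other.
    return await asyncio.gather(
        run_query_async(QUERY_TEMPLATE, "paris"),
        run_query_async(QUERY_TEMPLATE, "berlin"),
    )


print(asyncio.run(main()))
print(run_query(QUERY_TEMPLATE, "paris"))  # repeated parameters: cache hit
print(run_query.cache_info())
```

In production the in-process cache would more likely be a shared key-value store, so that every replica behind the load balancer benefits from the same cached results.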

Future Trends in RAG Architectures

The future of RAG hinges on its capacity to harmonize technical sophistication with a human-centered design, guaranteeing that its advantages are both transformative and inclusive. As various industries progressively embrace and enhance these systems, the opportunity for RAG to revolutionize decision-making, learning, and innovation is limitless. Below are several domains where RAG is expected to excel.

- Multimodal RAG combines text, images, and audio, facilitating personalized educational platforms that cater to various learning preferences. Analysts anticipate that this approach will prevail because merging the three produces richer outputs.

- Cross-lingual retrieval closes global communication gaps, promoting real-time multilingual collaboration.

- Adaptive algorithms help systems meet changing user requirements, ensuring scalability and inclusivity across different sectors.

Are you looking to kick-start your career in generative AI? Well, look no further than Eduinx. As a leading edtech institute in India, Eduinx houses industry experts as mentors who guide you through learning RAG, gen AI, LLMs, and other complex concepts with ease. We also guide students in completing capstone projects and landing their dream job through placement assistance. Get in touch with us for more information on the PG diploma course in generative AI.