Did you know that over 180 million users are using generative AI? With generative AI in use around the world, you may wonder how it is actually applied. In marketing, healthcare, finance, and other industries, applied generative AI helps you solve specific problems and perform tasks by producing original content and automating workflows, which can raise productivity and drive innovation. To understand the core applications of generative AI, you need to know about advanced RAG, graph-based retrieval systems, and structured data + LLM integration.

Are you an aspiring data scientist or AI professional looking to expand your scope and understand complex AI concepts? A solid grasp of RAG, retrieval systems, and LLMs will help you learn more advanced gen AI concepts and apply them in real-world projects. It will also help you incorporate gen AI across various touchpoints, making you a valuable resource across industries. With job openings in gen AI booming, these skills can give you a significant head start over the competition.

A Brief on LLMs in Generative AI

Large Language Models (LLMs) are advanced AI systems primarily created for the comprehension and generation of text that resembles human writing. These models are constructed using intricate architectures and trained on large datasets to perform a range of linguistic tasks.

- Architecture: LLMs generally employ deep learning frameworks like transformers, which consist of numerous layers and self-attention mechanisms to analyze and produce text.

- Training Data: They are trained on extensive and varied text data sourced from the internet, including books, articles, and websites, which allows them to comprehend and generate text in different contexts.

- Capabilities: LLMs are proficient in tasks such as text generation, summarization, translation, and functioning as conversational agents, thanks to their capacity to maintain context and produce coherent and relevant content.
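To make the self-attention idea from the Architecture point more concrete, here is a minimal sketch in plain Python. It is a simplification under stated assumptions: real transformers apply learned query, key, and value projections, use several attention heads, and stack many layers, none of which appear here. The function names and the toy token vectors are illustrative only.

```python
import math

def softmax(xs):
    """Turn raw scores into weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections (toy version).

    Assumption: queries, keys, and values are the input vectors themselves;
    a real transformer would first multiply them by learned weight matrices.
    """
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # Similarity of this token to every token, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        out = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)]
        outputs.append(out)
    return outputs

# Three toy "token embeddings" with two dimensions each.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = self_attention(tokens)
```

Each row of `mixed` blends information from all three tokens, which is the mechanism that lets a model keep track of context across a sentence.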

While Large Language Models (LLMs) and Generative AI (GenAI) are related, they signify different facets of the AI domain. LLMs are a specific subset of GenAI that concentrates on text-related tasks, utilizing deep learning methods to create coherent and contextually appropriate text. Conversely, Generative AI covers a wider array of technologies that produce various content types, including text, images, audio, and video, employing different techniques such as GANs and VAEs.

Grasping these differences is essential for selecting the right technology for particular applications and underscores the dynamic evolution of AI technologies. As both areas progress, they are set to offer increasingly sophisticated and innovative solutions across various fields.

What is RAG and Advanced RAG?

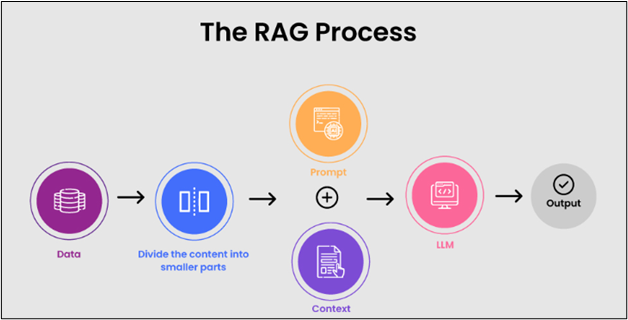

Retrieval-Augmented Generation (RAG) techniques signify a major leap forward in the capabilities of generative AI models. By effectively merging external knowledge sources with pre-trained language models, RAG alleviates many limitations found in traditional systems, resulting in responses that are more precise, contextually appropriate, and information-rich. Nevertheless, conventional RAG systems frequently encounter significant challenges. Problems such as low retrieval accuracy, insufficient contextual awareness in responses, and difficulties in handling complex queries can hinder their effectiveness.

Advanced RAG techniques improve the user experience by producing nuanced responses that reflect a deeper understanding of context and intent. By using more sophisticated retrieval mechanisms, these systems can draw relevant information from very large databases, which supports richer interactions and builds user trust and engagement. This blog examines a set of advanced techniques aimed at improving RAG performance by tackling these prevalent challenges directly. Here are some key differences between RAG and advanced RAG.

| RAG | Advanced RAG |
|---|---|
| The system retrieves pertinent information from a knowledge base and provides it directly to the LLM for response generation. | It adds processing steps both before and after retrieval to refine the information obtained. These steps improve the quality and precision of the generated response and keep the retrieved context aligned with what the model produces. |
| RAG is easy to implement because it simply combines retrieval with generation, enhancing a language model without complex modifications or additional components. | Re-ranking prioritizes the most pertinent information, improving both the accuracy and coherence of the final response. |
| A key benefit of RAG is that it does not require fine-tuning of the LLM. | Dynamic embeddings are tailored to specific tasks, helping the system understand and respond more accurately to a variety of queries. |
| By drawing on external, current information, RAG greatly improves the accuracy of the generated responses. | Hybrid search combines several search strategies to locate data more efficiently, giving more relevant and accurate results. |
| RAG addresses the frequent problem of LLMs producing incorrect or fabricated information by grounding responses in factual data retrieved during the process. | Context compression removes superfluous details, speeding up the process and producing more focused, higher-quality answers. |
| The straightforward nature of RAG makes it easy to scale across diverse applications, since it can be adapted without major changes to existing retrieval or generative components. | By reformulating and elaborating on queries before retrieval, advanced RAG helps ensure that user queries are properly understood, leading to more precise and relevant results. |
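The contrast in the table can be sketched in a few lines of Python. This is a toy illustration, not a production pipeline: bag-of-words cosine similarity stands in for a real embedding model, the `SYNONYMS` table is a hypothetical stand-in for query rewriting, counting shared terms stands in for a learned re-ranker, and keeping only the top passage is a crude form of context compression. No LLM is called; the functions only assemble the context that would be passed to one.

```python
import math
import re
from collections import Counter

DOCS = [
    "RAG retrieves passages from a knowledge base and passes them to the LLM.",
    "Re-ranking reorders retrieved passages so the most relevant come first.",
    "Transformers use self-attention to model relationships between tokens.",
]

# Hypothetical synonym table used for query expansion (pre-retrieval step).
SYNONYMS = {"reorder": ["re-ranking", "reorders"]}

def tokenize(text):
    return re.findall(r"[a-z0-9-]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token lists (bag-of-words)."""
    ca, cb = Counter(a), Counter(b)
    dot = sum(ca[t] * cb[t] for t in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(sum(v * v for v in cb.values()))
    return dot / norm if norm else 0.0

def retrieve(query_tokens, docs, k):
    ranked = sorted(docs, key=lambda d: cosine(query_tokens, tokenize(d)), reverse=True)
    return ranked[:k]

def naive_context(question, docs, k=1):
    # Naive RAG: retrieve and hand the result straight to the LLM.
    return retrieve(tokenize(question), docs, k)

def expand_query(question):
    tokens = tokenize(question)
    return tokens + [s for t in tokens for s in SYNONYMS.get(t, [])]

def advanced_context(question, docs, top_n=1):
    expanded = expand_query(question)                   # pre-retrieval: rewrite the query
    candidates = retrieve(expanded, docs, k=len(docs))  # over-fetch candidates
    # Post-retrieval: re-rank by number of distinct shared terms.
    reranked = sorted(
        candidates,
        key=lambda d: len(set(expanded) & set(tokenize(d))),
        reverse=True,
    )
    return reranked[:top_n]                             # keep only the best passage

question = "How to reorder candidates?"
naive = naive_context(question, DOCS)
advanced = advanced_context(question, DOCS)
```

On this toy corpus the naive path matches only on the common word "to" and returns the transformer passage, while the expanded query surfaces the re-ranking passage. The gap is exaggerated by the tiny corpus, but it shows why the extra steps around retrieval matter.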

Techniques of Advanced RAG

Each technique enhances retrieval accuracy, deepens contextual comprehension, or sharpens response generation, ultimately enabling developers to build more responsive and precise AI systems.

- Dense Passage Retrieval (DPR) is a natural language processing technique that uses dense vector representations of queries and passages to improve the retrieval of relevant passages from large datasets.

- Contrastive learning trains retrieval models so that matching query-passage pairs score higher than non-matching ones, boosting retrieval accuracy and relevance in RAG systems.

- Contextual Semantic Search uses context-aware embeddings to capture the intent behind words, going beyond basic keyword search.

- Cross-encoder models for ranking score each query-passage pair jointly, which makes them a strong re-ranking strategy within RAG systems.

- Knowledge Graph-Augmented Retrieval (KGAR) improves conventional retrieval models by incorporating knowledge graphs into the retrieval workflow.

- Hierarchical Document Clustering organizes a set of documents into a hierarchy of clusters, giving a structured representation that mirrors the interconnections among documents.

- Dynamic Memory Networks (DMNs) strengthen a neural network's ability to perform tasks that require reasoning over structured knowledge.

- The Entity-Aware Retrieval (E-A-R) method extends traditional RAG techniques by concentrating on the identification and contextualization of entities within a specific dataset.

- Prompt Chaining with Retrieval Feedback progressively improves the quality of AI responses by using a sequence of prompts combined with real-time feedback mechanisms.

- Hybrid Sparse-Dense Retrieval merges sparse (keyword-based) and dense (embedding-based) retrieval techniques to improve search relevance and precision.

- Augmented RAG with Re-Ranking Layers improves the accuracy and relevance of outputs produced by RAG models by re-scoring retrieved passages before generation.

- Neural Sparse Search, also referred to as Neural Retrieval Fusion, integrates the advantages of traditional sparse retrieval with neural-based dense retrieval to provide more accurate and contextually pertinent search results.

- Adaptive Document Expansion for Retrieval improves document relevance by dynamically broadening content to capture context-sensitive information.

- Progressive Retrieval with Adaptive Context Expansion seeks to improve the quality of information retrieval by dynamically extending the context in response to user inquiries.
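As one worked example from the list, Hybrid Sparse-Dense Retrieval can be sketched as two independent rankers whose results are merged. The sketch below makes several assumptions: exact word overlap stands in for a sparse scorer such as BM25, character-trigram cosine similarity stands in for a neural embedding model, and the two rankings are merged with reciprocal rank fusion, which is one common fusion choice among several. The documents and function names are illustrative.

```python
import math
from collections import Counter

DOCS = {
    "d1": "hybrid search combines keyword and vector retrieval",
    "d2": "keyword search matches exact terms",
    "d3": "vector embeddings capture semantic similarity",
}

def sparse_rank(query, docs):
    """Rank by number of exact shared words (stand-in for BM25)."""
    q = set(query.lower().split())
    return sorted(docs, key=lambda d: len(q & set(docs[d].split())), reverse=True)

def trigram_vector(text):
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))

def dense_rank(query, docs):
    """Rank by cosine similarity of character-trigram vectors.

    Assumption: a real system would use a neural encoder here.
    """
    qv = trigram_vector(query)

    def cos(dv):
        dot = sum(qv[t] * dv[t] for t in qv)
        norm = math.sqrt(sum(v * v for v in qv.values())) * math.sqrt(sum(v * v for v in dv.values()))
        return dot / norm if norm else 0.0

    return sorted(docs, key=lambda d: cos(trigram_vector(docs[d])), reverse=True)

def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

def hybrid_search(query, docs):
    return reciprocal_rank_fusion([sparse_rank(query, docs), dense_rank(query, docs)])

results = hybrid_search("keyword vector search", DOCS)
```

Fusing by rank rather than by raw score avoids having to put the sparse and dense scores on a common scale, which is why rank-based fusion is a frequent default.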

Understanding Graph-based Retrieval Systems

GraphRAG is a structured, hierarchical approach to Retrieval-Augmented Generation (RAG), in contrast to plain semantic-search techniques that retrieve isolated text snippets. The GraphRAG methodology involves extracting a knowledge graph from raw text, building a community hierarchy, generating summaries for these communities, and then using these structures when performing RAG-based tasks.
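The four GraphRAG stages described above can be outlined in a short sketch. It rests on loud simplifications: the triples are hand-written rather than extracted from raw text by an LLM, connected components give a single level of communities instead of a full hierarchy, and the "summary" is just the community's facts joined together rather than an LLM-written summary. All names and data are hypothetical.

```python
from collections import defaultdict

# Stage 1 (assumed done): triples that an LLM would extract from raw text.
TRIPLES = [
    ("Alice", "works_at", "Acme"),
    ("Acme", "located_in", "Berlin"),
    ("Bob", "studies", "Physics"),
]

def build_graph(triples):
    """Undirected adjacency map over all entities in the triples."""
    graph = defaultdict(set)
    for subj, _, obj in triples:
        graph[subj].add(obj)
        graph[obj].add(subj)
    return dict(graph)

def find_communities(graph):
    """Stage 2: connected components as a one-level stand-in for
    hierarchical community detection."""
    seen, communities = set(), []
    for start in graph:
        if start in seen:
            continue
        stack, members = [start], set()
        while stack:
            node = stack.pop()
            if node in members:
                continue
            members.add(node)
            stack.extend(graph[node] - members)
        seen |= members
        communities.append(members)
    return communities

def summarize(community, triples):
    """Stage 3: join the community's facts (stand-in for an LLM summary)."""
    facts = [f"{s} {p.replace('_', ' ')} {o}" for s, p, o in triples if s in community]
    return "; ".join(facts)

def answer_context(question, communities, triples):
    """Stage 4: pick summaries of communities that mention a question word."""
    words = set(question.replace("?", "").split())
    return [summarize(c, triples) for c in communities if words & c]

communities = find_communities(build_graph(TRIPLES))
context = answer_context("Where does Alice work?", communities, TRIPLES)
```

The returned context covers everything in Alice's community, including the fact about Berlin, even though the question never mentions it. Retrieving at the community level is what separates this approach from snippet-by-snippet search.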

Performing Semantic Search with Knowledge Graphs

Using knowledge graphs for semantic search in Retrieval-Augmented Generation (RAG) makes it easier to locate pertinent information by drawing on the structured connections among entities. This method supports more precise and contextually aware responses because it links data points through their relationships instead of depending exclusively on semantic similarity.
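Following relationships rather than similarity alone can be sketched as a bounded graph traversal. In the example below, the triples, the substring-based entity matching, and the `max_hops` parameter are all illustrative assumptions; a real system would typically use an entity linker and a graph database.

```python
from collections import deque

# Toy knowledge graph as (subject, relation, object) triples.
TRIPLES = [
    ("Paris", "capital_of", "France"),
    ("France", "member_of", "European Union"),
    ("Tokyo", "capital_of", "Japan"),
]

def graph_search(question, triples, max_hops=2):
    """Collect facts reachable within max_hops of entities named in the question."""
    entities = sorted({e for s, _, o in triples for e in (s, o)})
    # Naive entity matching: case-insensitive substring test.
    seeds = [e for e in entities if e.lower() in question.lower()]
    queue = deque((e, 0) for e in seeds)
    visited, facts = set(seeds), []
    while queue:
        entity, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for s, p, o in triples:
            if entity not in (s, o):
                continue
            if (s, p, o) not in facts:
                facts.append((s, p, o))
            other = o if s == entity else s
            if other not in visited:
                visited.add(other)
                queue.append((other, depth + 1))
    return facts

facts = graph_search("Which bloc is Paris connected to?", TRIPLES)
```

The question shares no words with the fact about the European Union, so a purely similarity-based search would be unlikely to surface it. The traversal reaches it in two hops through France, and the unrelated Tokyo fact is never touched.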

With the rise in applications of RAG systems across industries, demand for applied generative AI professionals is growing sharply. At Eduinx, a leading edtech institute in India, we are here to mentor you and guide you in landing your dream job. Our non-academic mentors help you understand the nuances of applied generative AI, LLMs, advanced RAG, and structured data + LLM integration. You can take up our PG diploma in Generative AI course to understand these concepts. Get in touch with us for more information on the course.