If you're building AI models in 2026 and governance feels like a bureaucratic afterthought, you're already behind. The EU AI Act's most critical provisions become enforceable in August 2026. Boards are asking hard questions. And data scientists who can't speak the language of ethical AI frameworks are finding themselves shut out of high-stakes projects.

This post breaks down the responsible AI governance landscape, the core frameworks, the six principles industry leaders have aligned on, and what all of this means if you're a data scientist shipping models into the real world.

Why Responsible AI Governance Is No Longer Optional

Until recently, ethical AI was largely self-regulated; companies published principles documents and called it governance. That era is over.

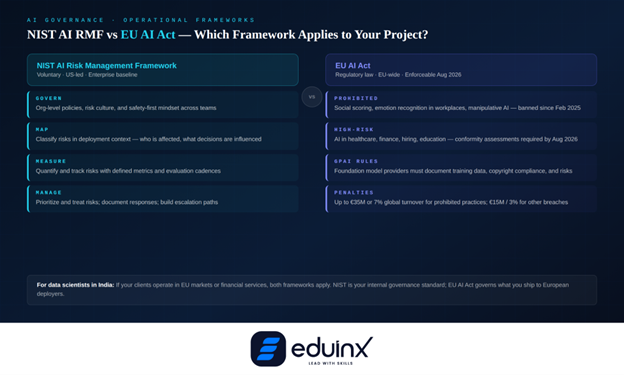

The EU AI Act entered into force on 1 August 2024 and is being phased in. Prohibited AI practices, including emotion recognition in workplaces, social scoring, and manipulative systems, have been banned since February 2025. Obligations for general-purpose AI models took effect in August 2025. From August 2026, most requirements for high-risk AI systems, which cover uses in areas such as healthcare, finance, employment, and education, become enforceable.

Fines for prohibited practices run up to €35 million or 7% of global annual turnover, whichever is higher. For most other violations, the ceiling is €15 million or 3% of turnover. These aren't hypothetical: the EU has already shown its enforcement appetite under its data privacy framework, the GDPR.

The United States is taking a different path. The NIST AI Risk Management Framework (AI RMF), published in January 2023, has become the de facto baseline for enterprise AI governance in North America. It is voluntary today, but it is increasingly cited in procurement requirements, litigation, and regulatory expectations. In April 2026, NIST also published a concept note for trustworthy AI in critical infrastructure, signaling where more prescriptive guidance is heading.

For data scientists in India building for global enterprise clients, both frameworks matter: directly, when your client operates in regulated markets, or indirectly, because your practices will be benchmarked against them.

🎯 Pro Tip: Inventory Your AI Systems Before Writing a Single Policy

Catalog every AI system your team owns—purpose, data sources, decision points, and affected populations. Regulators ask for this inventory first, and governance programs that start without it tend to collapse under their own weight. You cannot govern what you have not mapped.
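Here is a minimal sketch of such an inventory kept as structured records, using only the Python standard library. The `AISystem` class, its field names, and the `missing_fields` helper are hypothetical. The `owner` field is an assumption added on top of the four items above, because a regulator will also want to know who is accountable for each system.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AISystem:
    """One row of a hypothetical AI system inventory."""
    name: str
    purpose: str
    data_sources: list[str] = field(default_factory=list)
    decision_points: list[str] = field(default_factory=list)
    affected_populations: list[str] = field(default_factory=list)
    owner: str = ""  # assumption: one named, accountable owner per system


def missing_fields(system: AISystem) -> list[str]:
    """Return the names of inventory fields that are still empty."""
    return [f.name for f in fields(system) if not getattr(system, f.name)]


inventory = [
    AISystem(
        name="loan-default-scorer",
        purpose="Rank loan applications by predicted default risk",
        data_sources=["core_banking.repayments"],
        decision_points=["threshold for the manual review queue"],
        affected_populations=["retail loan applicants"],
        owner="credit-risk-ds-team",
    ),
    AISystem(name="resume-screener", purpose="Shortlist job applicants"),
]

for system in inventory:
    gaps = missing_fields(system)
    status = "complete" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{system.name}: {status}")
```

Running the gap check on every entry gives you a to-do list before any policy is written: here the hypothetical `resume-screener` has a purpose but no documented data, decisions, affected people, or owner.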

The 6 Principles of Responsible AI: What Each One Actually Demands

One of the most widely cited responsible AI frameworks comes from Microsoft. Its six principles (fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability) are operationalized through the company's internal Responsible AI Standard, which sets mandatory review requirements for its AI projects. Here's what each principle actually demands of a practicing data scientist.

Fairness means your model shouldn't allocate opportunities, risk scores, or outcomes in ways that systematically disadvantage people based on protected characteristics. Proxy variables such as zip codes, browsing behavior, or institution names can reintroduce bias through the back door. Fairness audits belong in your validation pipeline, not in a post-launch patch.
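A minimal sketch of a fairness check that runs inside validation, in plain Python. It computes the demographic parity difference (the largest gap in positive-prediction rates between groups) and fails the run when that gap exceeds a threshold. The function names and the 0.1 threshold are illustrative assumptions; if you already use Fairlearn, `fairlearn.metrics.demographic_parity_difference` computes the same metric.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Share of positive predictions (e.g. 'approve') within each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(y_pred, groups):
    """Largest gap in selection rate between any two groups."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


def fairness_gate(y_pred, groups, max_gap=0.1):
    """Raise if the selection-rate gap exceeds max_gap (a hypothetical policy threshold)."""
    gap = demographic_parity_difference(y_pred, groups)
    if gap > max_gap:
        raise ValueError(f"Fairness gate failed: demographic parity gap {gap:.2f} > {max_gap}")
    return gap


# Toy validation predictions: group A is approved 3 times in 4, group B once in 4.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

try:
    fairness_gate(y_pred, groups)
except ValueError as err:
    print(err)  # Fairness gate failed: demographic parity gap 0.50 > 0.1
```

Raising an exception, rather than logging a warning, is the design choice that turns the audit into a gate: a CI job that calls `fairness_gate` stops the release until someone looks at the gap.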

Reliability and safety means consistent performance across the conditions the model will actually encounter, including edge cases and adversarial inputs. Stress-testing is non-negotiable.
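One simple form of stress test is to re-score the validation set under increasing input noise and flag any noise level where accuracy drops too far below baseline. This is a sketch under stated assumptions: the toy model, the noise scales, and the 0.05 tolerance are hypothetical, and random Gaussian noise is a much weaker test than deliberately crafted adversarial inputs.

```python
import random


def accuracy(model, X, y):
    """Fraction of examples the model labels correctly."""
    return sum(model(x) == label for x, label in zip(X, y)) / len(y)


def add_noise(X, scale, rng):
    """Add Gaussian noise to every feature to mimic messy production inputs."""
    return [[v + rng.gauss(0.0, scale) for v in x] for x in X]


def stress_test(model, X, y, scales=(0.0, 0.1, 0.5), max_drop=0.05, seed=0):
    """Accuracy at each noise scale, flagged when it falls more than max_drop below baseline."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    report = {}
    for scale in scales:
        acc = accuracy(model, add_noise(X, scale, rng), y)
        report[scale] = (acc, baseline - acc > max_drop)
    return baseline, report


def toy_model(x):
    """Hypothetical classifier: predicts 1 when the two features sum to a positive value."""
    return int(x[0] + x[1] > 0)


# Two examples sit close to the decision boundary, so noise is likely to flip them.
X = [[0.9, 0.4], [-0.7, -0.2], [0.05, -0.02], [-0.03, 0.01], [1.2, 0.3], [-1.1, -0.6]]
y = [1, 0, 1, 0, 1, 0]

baseline, report = stress_test(toy_model, X, y)
for scale, (acc, flagged) in report.items():
    print(f"noise={scale}: accuracy={acc:.2f} (baseline {baseline:.2f}) flagged={flagged}")
```

The seeded `random.Random` instance keeps the perturbations reproducible, so a flagged result in CI can be rerun and debugged.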

Privacy and security requires AI systems to protect user data by design. Under the EU AI Act, providers of general-purpose AI (GPAI) models must also document their models and training data, put in place a policy to comply with EU copyright law, and publish a summary of the content used for training. For LLM applications, data provenance tracking should start on day one.
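Provenance tracking can start as small as fingerprinting each dataset snapshot and recording where it came from. The sketch below assumes a JSON record stored next to each training run; the field names are hypothetical, and the AI Act does not prescribe this format.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(data: bytes) -> str:
    """SHA-256 digest that identifies one exact snapshot of a dataset."""
    return hashlib.sha256(data).hexdigest()


def provenance_record(name, data, source, license_name, contains_personal_data):
    """Build a hypothetical provenance entry to store alongside a training run."""
    return {
        "dataset": name,
        "sha256": fingerprint(data),
        "source": source,
        "license": license_name,
        "contains_personal_data": contains_personal_data,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


# In practice you would hash the file bytes; a tiny inline CSV stands in here.
snapshot = b"ticket_id,text\n1,My card was declined\n"
record = provenance_record(
    name="support-tickets-2025q4",
    data=snapshot,
    source="internal CRM export",
    license_name="internal use only",
    contains_personal_data=True,
)
print(json.dumps(record, indent=2))
```

Because the hash changes whenever a single byte changes, the record answers "which data trained this model?" precisely, which is the question a copyright or privacy inquiry will ask.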

Inclusiveness asks whether the system works for people across different backgrounds, languages, abilities, and geographies. Strong overall accuracy is not enough; you need performance parity across user segments.
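The gap between "overall accuracy" and "parity across segments" is easy to show with toy numbers. This sketch assumes a hypothetical classifier evaluated on English and Hindi users and a 0.05 maximum gap; both the data and the threshold are illustrative.

```python
from collections import defaultdict


def accuracy_by_segment(y_true, y_pred, segments):
    """Accuracy computed separately for each user segment (e.g. language)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p, s in zip(y_true, y_pred, segments):
        total[s] += 1
        correct[s] += int(t == p)
    return {s: correct[s] / total[s] for s in total}


def parity_report(y_true, y_pred, segments, max_gap=0.05):
    """Compare each segment with the best-served one; flag gaps above max_gap (hypothetical)."""
    per_segment = accuracy_by_segment(y_true, y_pred, segments)
    best = max(per_segment.values())
    return {s: (acc, best - acc > max_gap) for s, acc in per_segment.items()}


y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 0, 1, 1]
segments = ["en", "en", "en", "en", "hi", "hi", "hi", "hi"]

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"overall accuracy: {overall:.2f}")  # overall accuracy: 0.75
for segment, (acc, flagged) in parity_report(y_true, y_pred, segments).items():
    print(f"{segment}: accuracy={acc:.2f} flagged={flagged}")
# en: accuracy=1.00 flagged=False
# hi: accuracy=0.50 flagged=True
```

A respectable 75% overall hides a model that is perfect for one segment and a coin flip for the other.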

Transparency means stakeholders can understand what the system does and, for high-risk decisions, why it reached a particular output. Explainability tools like SHAP and LIME are now standard components of model documentation.
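To show what a SHAP explanation actually attributes, this sketch computes SHAP values by hand for the one case with a simple closed form: a linear model with independent features. There, each feature's contribution is its weight times its deviation from the background mean, and the contributions plus the base value add up exactly to the prediction. The model, feature names, and data are hypothetical. In practice you would use the `shap` or `lime` packages rather than this hand-rolled version.

```python
def linear_shap_values(weights, bias, background, x):
    """Exact SHAP values for a linear model, assuming independent features.

    phi_i = w_i * (x_i - mean_i), where mean_i is the feature's average over
    the background data; the base value is the model's average prediction.
    """
    n = len(background)
    means = [sum(row[i] for row in background) / n for i in range(len(weights))]
    base_value = bias + sum(w * m for w, m in zip(weights, means))
    phis = [w * (xi - m) for w, xi, m in zip(weights, x, means)]
    return base_value, phis


def predict(weights, bias, x):
    """Linear score, e.g. the log-odds of approval."""
    return bias + sum(w * xi for w, xi in zip(weights, x))


# Hypothetical credit model on [income_lakh, existing_loans].
weights, bias = [0.5, -2.0], 1.0
background = [[10.0, 1.0], [6.0, 0.0], [8.0, 2.0]]
applicant = [4.0, 3.0]

base, phis = linear_shap_values(weights, bias, background, applicant)
print(f"base value: {base:.2f}")  # base value: 3.00
for name, phi in zip(["income_lakh", "existing_loans"], phis):
    print(f"{name}: {phi:+.2f}")  # income_lakh: -2.00, then existing_loans: -4.00
print(f"prediction: {predict(weights, bias, applicant):.2f}")  # prediction: -3.00
```

The explanation reads directly as documentation: compared with a typical applicant, lower income cost this applicant 2 points and existing loans cost 4.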

Accountability is where governance becomes organizational. The EU AI Act formalizes this through "provider" and "deployer" roles, each with specific legal obligations attached. Someone has to own the model. Someone has to answer when it goes wrong.

"AI governance is not only about the AI, it's about data protection, it's about cybersecurity, it's about the data science team." - Giovanna, AI Governance Expert (SheAI)

Governance isn't a layer you add to a finished model. It runs through every decision the team makes, from data sourcing to deployment to monitoring.

The Operational Frameworks: NIST AI RMF and ISO 42001

Principles tell you what to aim for. Frameworks tell you how to get there.

The NIST AI RMF organizes governance into four functions: Govern, Map, Measure, and Manage. Govern establishes organization-level policies and a safety-first culture. Map identifies and classifies risks in your specific deployment context. Measure quantifies and tracks those risks over time. Manage means prioritizing and responding to what you've found. What makes it useful is that it is voluntary guidance rather than law, so it adapts to any team size or project complexity. The 2024 Generative AI Profile (NIST AI 600-1) added specific guidance for LLM risks: hallucinations (which NIST calls confabulation), data memorization, dangerous content generation, and cybersecurity exposure.
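Map, Measure, and Manage become concrete in a risk register; Govern is the organizational policy that says the register must exist and who reviews it. This is a minimal sketch. NIST does not prescribe a register format or scoring method, so the fields and the likelihood-times-impact priority below are assumptions, and the example risks are hypothetical ones drawn from the generative AI categories above.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """One entry in a hypothetical AI risk register."""
    description: str    # Map: a risk identified in this deployment context
    metric: str         # Measure: how the risk is quantified and tracked
    likelihood: int     # assumed 1-5 scale (1 = rare, 5 = almost certain)
    impact: int         # assumed 1-5 scale (1 = minor, 5 = severe)
    response: str = "unassigned"  # Manage: mitigate, transfer, or accept

    @property
    def priority(self) -> int:
        """Simple likelihood x impact score used to order the register."""
        return self.likelihood * self.impact


register = [
    Risk("Support bot states a refund policy that doesn't exist",
         "confabulation rate on a held-out eval set", 4, 3,
         "ground answers in policy docs; escalate to a human"),
    Risk("Model memorizes and leaks customer PII",
         "canary-string extraction tests", 2, 5),
    Risk("Prompt injection through uploaded files",
         "red-team attack success rate", 3, 4),
]

# Manage: work through risks from highest to lowest priority.
for risk in sorted(register, key=lambda r: r.priority, reverse=True):
    print(f"{risk.priority:>2}  {risk.description}  ->  {risk.response}")
```

Anything still marked `unassigned` near the top of the sorted list is exactly what a reviewer will ask about first.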

ISO/IEC 42001, published in 2023, provides a certifiable management system standard for AI governance, covering everything from organizational structure to risk management and compliance. Think of it as the AI equivalent of ISO 27001. If you're at a consultancy, a fintech, or any organization selling AI into regulated markets, expect clients to start asking about it. Understanding the framework now puts you ahead of that conversation.

Governance accountability gets especially tangled in multi-agent AI orchestration pipelines. When agents chain actions across systems, pinning ownership of a decision to a single component becomes genuinely hard, and your framework needs to account for that.
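One practical mitigation, assumed here rather than prescribed by any framework, is to log every agent action with an accountable owner and a link to the action that triggered it, so any outcome can be traced back through the chain. The `AgentAction` record and `trace` helper below are hypothetical.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentAction:
    """Hypothetical audit-log entry for one step in a multi-agent pipeline."""
    agent: str
    owner: str                       # team accountable for this agent's behavior
    action: str
    parent_id: Optional[str] = None  # action that triggered this one, if any
    action_id: str = field(default_factory=lambda: uuid.uuid4().hex)


def trace(log, action_id):
    """Follow parent links from an action back to the root, oldest step first."""
    by_id = {entry.action_id: entry for entry in log}
    chain = []
    current = by_id.get(action_id)
    while current is not None:
        chain.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))


log = []
planner = AgentAction("planner", "platform-team", "decided the customer is owed a refund")
log.append(planner)
payer = AgentAction("payments-agent", "payments-team", "issued a refund",
                    parent_id=planner.action_id)
log.append(payer)

for step in trace(log, payer.action_id):
    print(f"{step.agent} (owner: {step.owner}): {step.action}")
```

The trace doesn't decide who is to blame, but it turns "which component did this?" from guesswork into a lookup, with a named team at every step.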

🔍 Pro Tip: Align Your Model Cards to the NIST MAP Function

Every model you ship should answer: What problem does this solve? Who does it affect? What data was used? What are the known failure modes? This maps directly to the NIST MAP function and will save you hours when a compliance review lands.
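A minimal sketch of a model card that encodes those four questions and refuses to render while any of them is unanswered. The field names, the Markdown layout, and the fraud-model contents are hypothetical examples.

```python
from dataclasses import dataclass, asdict

# Hypothetical mapping from model-card fields to the four questions above.
QUESTIONS = {
    "problem": "What problem does this solve?",
    "affected_people": "Who does it affect?",
    "training_data": "What data was used?",
    "known_failure_modes": "What are the known failure modes?",
}


@dataclass
class ModelCard:
    problem: str
    affected_people: str
    training_data: str
    known_failure_modes: list[str]


def render(card: ModelCard) -> str:
    """Render the card as Markdown, refusing if any question is unanswered."""
    answers = asdict(card)
    blank = [QUESTIONS[k] for k, v in answers.items() if not v]
    if blank:
        raise ValueError("Unanswered model-card questions: " + "; ".join(blank))
    sections = []
    for key, question in QUESTIONS.items():
        value = answers[key]
        body = "\n".join(f"- {item}" for item in value) if isinstance(value, list) else value
        sections.append(f"## {question}\n{body}")
    return "\n\n".join(sections)


card = ModelCard(
    problem="Flag potentially fraudulent card transactions for manual review",
    affected_people="Cardholders whose transactions are held; fraud analysts",
    training_data="Labelled historical transactions from the payments warehouse",
    known_failure_modes=["Higher false-positive rate on first-time international purchases"],
)
print(render(card))
```

Treating an empty `known_failure_modes` list as an error is deliberate: "none known" usually means "not yet tested", which is worth surfacing before a compliance review does.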

Algorithmic Bias Mitigation: Where Most Teams Still Fall Short

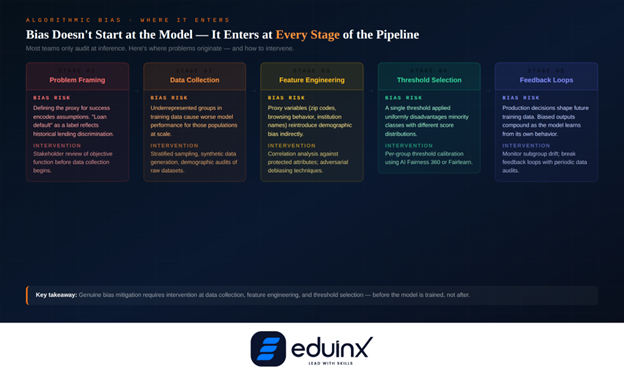

The typical bias audit checks demographic parity or equal opportunity metrics at the model output level. That's a start, but bias enters the pipeline at multiple points: problem framing, data collection, feature engineering, inference thresholds, and feedback loops that determine which data gets collected next.

A credit model trained on loan repayment data will systematically underserve populations historically denied credit, not because the model is malicious, but because the training data reflects past discrimination. Fixing it requires intervening earlier in the pipeline: changing how data is collected, reweighting training examples, generating synthetic data, or adding fairness constraints during training. Checking fairness metrics after the fact is not enough.
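Reweighting is the easiest of these to sketch. The version below implements reweighing in the style of Kamiran and Calders, the scheme behind AIF360's `Reweighing` preprocessor: each example gets weight P(group) × P(label) / P(group, label), so that group and label look statistically independent in the weighted data. The groups and labels are toy values.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights w(g, y) = P(g) * P(y) / P(g, y)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Toy history: group A has 3 positive outcomes in 4, group B only 1 in 4.
groups = ["A"] * 4 + ["B"] * 4
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

for g in ("A", "B"):
    before = weighted_positive_rate(groups, labels, [1.0] * len(labels), g)
    after = weighted_positive_rate(groups, labels, weights, g)
    print(f"group {g}: positive rate {before:.2f} -> {after:.2f}")
# group A: positive rate 0.75 -> 0.50
# group B: positive rate 0.25 -> 0.50
```

Many scikit-learn estimators accept these weights through the `sample_weight` argument of `fit`. Note the limit, though: reweighing only rebalances the records you have. It cannot recover repayment outcomes for applicants who were never approved, which is why data collection stays on the list.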

IBM's AI Fairness 360, Google's What-If Tool, and Fairlearn (originally developed at Microsoft) are all mature, production-ready tools. The more fundamental shift, though, is organizational: bias detection needs to be a required gate in the development lifecycle, not an optional task run by one engineer when they find time.

The risk is especially acute for real-time personalization systems. When algorithms determine which job ads, loan offers, or educational content a user sees, small biases compound across millions of decisions into a serious equity problem.

Putting It Into Practice: A Three-Part Starting Point

Most teams know they should do governance but struggle to start. The tendency is to treat it as a one-time compliance exercise: fill out a form, file it, move on. That approach fails almost immediately when the model hits production.

Start with three things. First, a risk classification at project kickoff: before the first line of training code, determine what decisions this model influences, who could be harmed if it fails, and what data is involved. This calibrates how much governance is proportionate.

Second, documentation that travels with the model: model cards at minimum and, for higher-risk applications, data lineage documentation, fairness evaluation reports, and a defined escalation path for unexpected behavior.

Third, production monitoring with defined response thresholds: models drift, distributions shift, and bias patterns emerge over time. Without monitoring, you won't know until something goes wrong publicly.
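A defined response threshold needs a metric behind it. The sketch below uses the Population Stability Index (PSI), one common drift measure, to compare a feature's production distribution against its training baseline. The bin cut points, the toy data, and the 0.1 and 0.25 thresholds are assumptions; those thresholds are a widely used rule of thumb, not a standard, and should be tuned per model. Bias monitoring would additionally rerun your fairness checks on fresh labelled data.

```python
import bisect
import math


def bin_shares(values, cut_points, eps=1e-6):
    """Share of values in each bin defined by sorted cut points (outer bins are open-ended)."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect.bisect_right(cut_points, v)] += 1
    # eps keeps empty bins from producing log(0) or division by zero.
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, production, cut_points):
    """Population Stability Index: sum over bins of (p - b) * ln(p / b)."""
    b = bin_shares(baseline, cut_points)
    p = bin_shares(production, cut_points)
    return sum((pi - bi) * math.log(pi / bi) for bi, pi in zip(b, p))


def drift_status(score, warn=0.1, alert=0.25):
    """Map a PSI score to an action using rule-of-thumb thresholds (assumed)."""
    if score >= alert:
        return "alert: investigate and consider retraining"
    if score >= warn:
        return "warn: monitor closely"
    return "stable"


cut_points = [0.25, 0.5, 0.75]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
production = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95]  # shifted toward high values

print(drift_status(psi(baseline, baseline, cut_points)))    # stable
print(drift_status(psi(baseline, production, cut_points)))  # alert: investigate and consider retraining
```

Wiring `drift_status` into a scheduled job, with each status mapped to a named responder, is what turns monitoring into the "defined response" the paragraph above calls for.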

Industry estimates suggest that organizations with mature governance practices allocate roughly 5–10% of their AI development budget to ethics and oversight. That isn't overhead; it's risk management with a measurable return.

🧠 Pro Tip: Build Ethics Reviews Into Your Sprint Cadence

No ethics committee needed to start. Add 20 minutes to your sprint retrospective: What assumptions did we embed? Did we check for bias in the new feature? Who could be affected? Small, regular checkpoints compound into a strong governance culture over time.

Conclusion

Responsible AI governance is the infrastructure layer beneath every AI system that matters. Microsoft's six principles, the NIST AI RMF, ISO 42001, and the EU AI Act aren't separate initiatives. They're different lenses on the same problem: how do you build AI that people can trust, that performs reliably across diverse populations, and that holds up to regulatory scrutiny?

For data scientists in 2026, this is core competency, not compliance overhead. Inventory your systems, classify your risks, document as you build, and treat fairness auditing as a technical requirement. The teams that do this well will work on the most consequential AI projects being built.