For the past few years, "AI in product management" meant one thing: a smarter search bar. You'd ask your tool to summarize a ticket, generate a status update, or suggest a due date. Useful, sure — but the core work still ran on human decisions. A task moved forward because a PM moved it.

That model is shifting. A new class of AI is being built directly into the tools your team lives in — and this one doesn't wait for prompts. It monitors your products, surfaces risks, reroutes tasks, and in some cases, takes action before you even log in for the day.

This is what agentic execution looks like in practice. And if you're a working professional in tech, a team lead, or anyone trying to understand where AI careers are actually heading, this shift matters more than most headlines suggest.

AI Assistants vs. AI Agents: The Line That Actually Matters

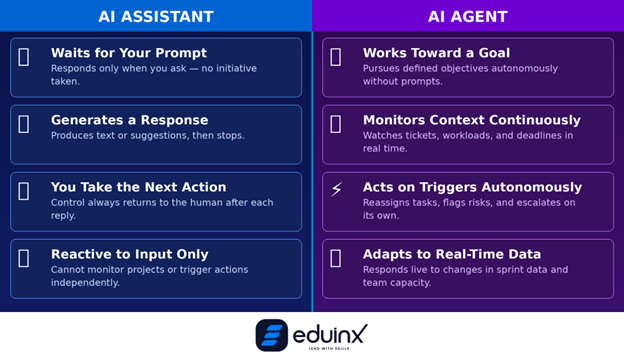

Most teams today use what the industry calls copilot-style AI. These tools are reactive — they respond to a prompt, generate a suggestion, and hand control back to you. They speed up individual tasks. They don't change how the system operates.

AI agents are different in one specific way: they have goals, not just instructions.

An agent in a PM tool doesn't wait for you to ask it to check on a sprint. It continuously monitors ticket status, dependency chains, team velocity, and workload data. When something is off, it either alerts you or takes a defined action — reassigning a task, triggering an escalation, or adjusting a milestone — depending on the permissions you've set.
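To make the alert-versus-act split concrete, here is a minimal sketch of one monitoring pass over a ticket. Everything in it is hypothetical (the `Ticket` fields, the `Permission` levels, the three-day staleness threshold); it illustrates the pattern, not any vendor's actual agent.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Permission(Enum):
    ALERT_ONLY = "alert_only"  # agent may only notify a human
    AUTO_ACT = "auto_act"      # agent may take the defined action itself


@dataclass
class Ticket:
    key: str
    assignee: str
    days_without_update: int
    blocked_by: list[str]


def review_ticket(ticket: Ticket, open_keys: set[str],
                  permission: Permission, stale_after_days: int = 3) -> Optional[str]:
    """Return the action the agent would take for one ticket, or None if healthy."""
    # A dependency only counts as a blocker while it is still open.
    blockers = [k for k in ticket.blocked_by if k in open_keys]
    if blockers:
        problem = f"{ticket.key} is blocked by open work: {', '.join(blockers)}"
    elif ticket.days_without_update >= stale_after_days:
        problem = f"{ticket.key} has had no update for {ticket.days_without_update} days"
    else:
        return None
    # Same detection logic either way; the permission decides what happens next.
    if permission is Permission.AUTO_ACT:
        return f"ESCALATE: {problem}"
    return f"ALERT {ticket.assignee}: {problem}"
```

The detection logic is identical in both modes. Only the permission setting changes whether the outcome is a notification or an action.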

The distinction matters because it changes the operating model. Copilot AI makes you faster within a phase of work. Agentic AI changes what happens at the handoffs between phases — which is exactly where most product delays and context loss actually occur.

McKinsey's QuantumBlack team, writing about agentic workflows in software development, argues that the biggest problem with AI-assisted development isn't the code generation. It's that "the handoff from requirements to design to implementation is where context goes to die." Agentic systems are being built specifically to address this.

🎯 Pro Tip: Start with One High-Volume Handoff

Before deploying an AI agent broadly, identify one workflow where context consistently gets lost between teams — like the gap between a standup decision and a Jira update. That's where agents show their value fastest. Narrow scope, fast signal.

What Agentic Execution Actually Looks Like in PM Tools Today

Let's get specific. Here's how agentic capabilities are showing up in the tools teams actually use right now.

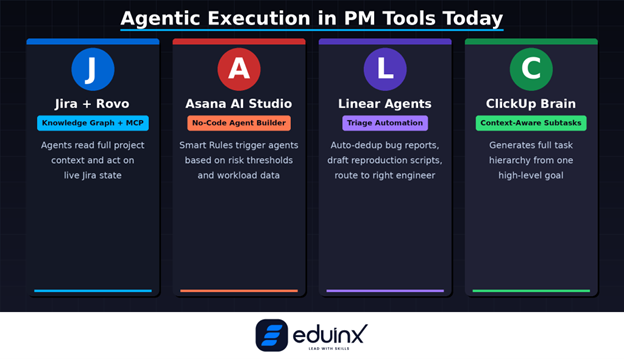

Jira + Rovo: The Knowledge Graph Approach

Jira has moved well past ticketing. With Atlassian's Rovo, Jira now functions as a knowledge graph that agents can query and act on. Rovo agents can create work items, update fields, surface related decisions from Confluence, and flag risks — all within the context of ongoing products.

In April 2026, Atlassian launched MCP Server GA, which connects external AI clients like Claude and Cursor to Jira's full product state via the Model Context Protocol. This means teams can now build custom agent workflows using their own AI models, with full read access to live Jira data.

For enterprise teams, this is significant: your AI agent can now understand not just what's in the ticket, but the full product context around it.

Asana AI Studio: No-Code Agent Builder

Asana's AI Studio has emerged as one of the more mature no-code agent builders available today. Teams at Asana itself use it to triage security alerts, route bug reports, and automate security review workflows — none of which require a developer to configure.

The standout capability is Smart Rules. You can define logic like: "If the risk score on Product A exceeds 'High,' schedule a meeting between the Product Lead and Risk Officer and draft the agenda from the flagged risks." The agent handles execution. The manager handles judgment.
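Written out as code, the rule quoted above might look like the sketch below. This is not Asana's API or rule syntax. The risk scale, the `Risk` type, and the shape of the returned meeting request are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed ordering of risk levels; a real tool would define its own scale.
RISK_LEVELS = ["Low", "Medium", "High", "Critical"]


@dataclass
class Risk:
    title: str
    level: str


def apply_rule(product: str, risks: list[Risk], threshold: str = "High") -> Optional[dict]:
    """Return a meeting request if any risk exceeds the threshold, else None."""
    limit = RISK_LEVELS.index(threshold)
    # "Exceeds" is read strictly: a risk rated exactly at the threshold does not fire.
    flagged = [r for r in risks if RISK_LEVELS.index(r.level) > limit]
    if not flagged:
        return None
    return {
        "product": product,
        "attendees": ["Product Lead", "Risk Officer"],
        # The agenda is drafted directly from the flagged risks.
        "agenda": [f"{r.title} ({r.level})" for r in flagged],
    }
```

Whether "exceeds High" should include "High" itself is exactly the kind of judgment call a team has to settle before handing the rule to an agent.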

If you want to go deeper on how agentic AI is transforming product delivery cycles, the Eduinx blog on Agentic AI in Product Management covers the upstream impact on go-to-market timelines in detail.

Linear's Triage Agents: Signal Over Noise

Linear has always been the fast-and-opinionated alternative to Jira, favored by engineering-focused startups. In 2026, its Triage Agents are doing something genuinely useful: autonomously reading incoming bug reports, deduplicating them against existing tickets, and drafting reproduction scripts for engineers.

The result is a measurable improvement in the signal-to-noise ratio on engineering backlogs. Less time triaging. More time building.
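Deduplication is the easiest part of that triage flow to picture. The sketch below compares a new report's title against existing tickets using plain string similarity from Python's standard library. It is a stand-in, not Linear's method: a production agent would more plausibly use semantic matching, and the 0.8 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher
from typing import Optional


def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't matter."""
    return " ".join(title.lower().split())


def find_duplicate(new_title: str, existing: dict[str, str],
                   threshold: float = 0.8) -> Optional[str]:
    """Return the key of the most similar existing ticket, or None if none is close enough."""
    best_key, best_score = None, 0.0
    for key, title in existing.items():
        score = SequenceMatcher(None, normalize(new_title), normalize(title)).ratio()
        if score > best_score:
            best_key, best_score = key, score
    return best_key if best_score >= threshold else None
```

A match would typically be linked to the existing ticket rather than silently closed, so an engineer can still overrule the agent.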

ClickUp Brain: Context-Aware Sub-Task Generation

ClickUp Brain takes a different angle. When you create a high-level task — say, "Launch Mobile App v2.0" — ClickUp's AI agent draws on your team's previous launch history to autonomously generate the sub-tasks required: QA, UAT, App Store submission, and so on.

It's less about autonomous action and more about closing the gap between intent and execution plan. For PMs who spend hours building out task hierarchies, that's a meaningful time reclaim.
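One simple way to picture "drawing on previous launch history" is a frequency heuristic: suggest any sub-task that appeared in at least half of past launches. This is an illustrative stand-in, not how ClickUp Brain works internally, and the function name and `min_share` parameter are made up for the sketch.

```python
from collections import Counter


def suggest_subtasks(past_launches: list[list[str]], min_share: float = 0.5) -> list[str]:
    """Suggest sub-tasks that appeared in at least min_share of past launches."""
    if not past_launches:
        return []
    # Count each sub-task once per launch, even if it was listed twice there.
    counts = Counter(task for launch in past_launches for task in set(launch))
    cutoff = min_share * len(past_launches)
    # Keep first-seen order so the suggested plan reads in a natural sequence.
    ordered = list(dict.fromkeys(task for launch in past_launches for task in launch))
    return [task for task in ordered if counts[task] >= cutoff]
```

With three past launches where only one included a press kit, the suggestion list would keep QA, UAT, and App Store submission and drop the one-off.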

🧠 Pro Tip: Data Quality First, Agents Second

Portfolio-level AI is only as good as the consistency of your product data. Before deploying agentic features for multi-product visibility, audit your task structure and field completion rates. Agents working from incomplete data produce unreliable predictions, and those predictions get trusted more than they should be.

The Real Architecture: Specialized Agents, Not One Smart Bot

One of the more important insights coming out of teams that have actually deployed agentic systems in production is this: a single, general-purpose agent fails on complex products. What works is a multi-agent setup where each agent has a bounded, specific responsibility.

Cisco's engineering team, writing about its agentic engineering implementation, describes the architecture this way: specialized worker agents handle individual tasks, while a leader agent coordinates across them, maintains shared memory, manages tool access, and provides global observability into decisions.

Their results: across more than 15 development workflows, they observed a 65% reduction in execution time compared to historical baselines — and the gains weren't primarily from faster code generation. The bigger impact came from compressing downstream workflows after code was merged, through coordinated agent execution.
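The leader/worker split can be sketched in a few lines. This is a toy model under stated assumptions, not Cisco's implementation: workers are plain functions, shared memory is a dictionary, and "observability" is reduced to a trace of which worker ran in what order.

```python
from typing import Callable

# A worker reads the shared memory and returns its own output.
Worker = Callable[[dict], str]


class Leader:
    """Coordinates bounded workers; owns the shared memory and the trace."""

    def __init__(self, workers: dict[str, Worker]) -> None:
        self.workers = workers
        self.memory: dict[str, str] = {}  # shared across all workers
        self.trace: list[str] = []        # which worker ran, in order

    def run(self, plan: list[str]) -> dict[str, str]:
        for name in plan:
            # Each worker sees what earlier workers produced, nothing more.
            output = self.workers[name](self.memory)
            self.memory[name] = output
            self.trace.append(name)
        return self.memory
```

For example, a `spec` worker could write a short requirement into memory and a `tests` worker could read it back, with the leader's trace recording that `spec` ran before `tests`.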

This mirrors the pattern QuantumBlack described: agents are good at generating content within a bounded problem. They struggle with meta-level decisions about sequencing. The fix is a rule-based workflow engine that enforces phase transitions — requirements must be complete before tasks are generated, architecture reviewed before implementation starts — while agents handle execution within each phase.
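A rule-based gate of this kind is small enough to sketch. The phase names and the prerequisite map below are assumptions for illustration (the source only says requirements precede task generation and architecture review precedes implementation). The point is that the engine, not the agent, decides when a phase may start.

```python
from typing import Callable

# Assumed prerequisite map for illustration; phase names are hypothetical.
PREREQUISITES = {
    "requirements": [],
    "architecture_review": ["requirements"],
    "task_generation": ["requirements"],
    "implementation": ["architecture_review", "task_generation"],
}


class PhaseGateError(Exception):
    """Raised when a phase is started before its prerequisites are complete."""


class Workflow:
    def __init__(self) -> None:
        self.completed: set[str] = set()

    def can_start(self, phase: str) -> bool:
        return all(p in self.completed for p in PREREQUISITES[phase])

    def run_phase(self, phase: str, agent: Callable[[str], str]) -> str:
        # The rule engine, not the agent, decides whether the phase may begin.
        if not self.can_start(phase):
            missing = [p for p in PREREQUISITES[phase] if p not in self.completed]
            raise PhaseGateError(f"{phase} blocked until complete: {', '.join(missing)}")
        output = agent(phase)  # the agent only does the work inside the phase
        self.completed.add(phase)
        return output
```

Asking this workflow to run `implementation` first raises `PhaseGateError`, however capable the agent passed in happens to be.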

This has a direct implication for how PMs should think about AI deployment: don't look for one AI that manages everything. Look for a coordination layer that connects specialized agents with clear handoffs and human checkpoints built in.

For teams exploring how AI orchestration manages this kind of cross-functional complexity, the Eduinx deep dive on AI Orchestration is worth reading alongside this.

Decision-Making Automation: Where Human Judgment Still Belongs

The question most PMs ask when they first encounter agentic PM tools is a practical one: what exactly is the agent allowed to do on its own?

The answer varies by tool, but the design pattern is consistent across the mature platforms: agents get full autonomy on routine, reversible actions. Anything that changes a product's scope, commitments, or resource allocation requires a human review step.

Atlassian's documentation frames it clearly: teams should calibrate agent permissions based on the consequence and reversibility of the action. Auto-assign a bug to the engineer who wrote the relevant code six months ago? Autonomous is fine. Reschedule a client milestone? That needs a review.

The practical implementation is audit logs and approval queues. Every agent action is visible, reversible, and auditable. This is the design pattern that makes agentic AI deployable in real organizations, not just demos.
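Here is a minimal sketch of that pattern, assuming a simple two-flag model of an action (reversible or not, touches commitments or not). The `Action` and `Governor` names are hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    name: str
    reversible: bool
    changes_commitments: bool  # scope, milestones, or resource allocation


@dataclass
class Governor:
    audit_log: list[str] = field(default_factory=list)
    approval_queue: list[Action] = field(default_factory=list)

    def submit(self, action: Action) -> str:
        """Execute routine, reversible actions; queue everything else for a human."""
        if action.reversible and not action.changes_commitments:
            decision = "executed"
        else:
            self.approval_queue.append(action)
            decision = "queued_for_review"
        # Every decision is logged, whether or not the agent acted on it.
        self.audit_log.append(f"{action.name}: {decision}")
        return decision
```

Under this model, auto-assigning a bug executes immediately, while rescheduling a client milestone lands in the approval queue even though it could technically be undone.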

The emerging risk is different from what most people expect. The concern isn't that agents will act outside their permissions — most are well-constrained. The concern is that their predictions and recommendations get trusted at face value, without the team doing the second-order thinking. An agent that surfaces a risk is still relying on you to decide whether the risk actually matters in context.

🚀 Pro Tip: Define Agent Success Metrics Before Deployment

Before giving an agent autonomy over sprint assignments or backlog triage, define what "working" means: number of manual updates eliminated, cycle time improvement, or team hours reclaimed. Without a baseline, you can't tell if the agent is actually helping or just creating a new category of overhead to review.
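Two of those measures are simple enough to compute from data most teams already have. The sketch below assumes you can export per-ticket cycle times in days from before and after the agent was enabled. The function names are illustrative.

```python
from statistics import mean


def cycle_time_improvement(baseline_days: list[float], current_days: list[float]) -> float:
    """Percent reduction in mean cycle time versus the pre-agent baseline."""
    before, after = mean(baseline_days), mean(current_days)
    return round((before - after) / before * 100, 1)


def net_hours_reclaimed(hours_saved: float, hours_reviewing_agent: float) -> float:
    """Hours saved minus the new overhead of reviewing the agent's output."""
    return hours_saved - hours_reviewing_agent
```

A negative result from `net_hours_reclaimed` is the signal that the agent has become a new review queue rather than a time saver.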

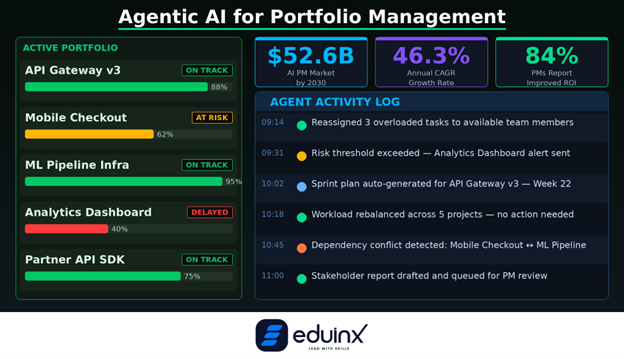

Agentic AI for Portfolio Management: The Higher-Stakes Opportunity

For senior PMs, program managers, and PMO leads, the most consequential application of agentic AI isn't at the sprint level. It's at the portfolio level — where the complexity of tracking multiple workstreams, resource constraints, and interdependencies makes manual oversight genuinely infeasible.

Monday.com's workload view allows teams to model "what if" scenarios: pull a product forward, and immediately see the downstream impact on individual capacity before committing. This is not a report. It's a live simulation tied to real team data.
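The core of a "what if" check like this is just capacity arithmetic. The sketch below is a simplified illustration, not Monday.com's model: it assumes weekly capacity and load in hours per person and reports who would end up over capacity if the extra work were committed.

```python
def simulate_pull_forward(capacity: dict[str, float],
                          current_load: dict[str, float],
                          added_load: dict[str, float]) -> dict[str, float]:
    """Return hours over capacity per person if the added load is committed."""
    overload = {}
    for person, cap in capacity.items():
        total = current_load.get(person, 0) + added_load.get(person, 0)
        if total > cap:
            overload[person] = total - cap
    return overload
```

Because nothing is written back to the live plan, the same function can be run against several candidate schedules before anyone commits to one.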

The global market for AI in product management is projected to reach $52.62 billion by 2030, growing at a CAGR of 46.3%. That number reflects where enterprise investment is heading. For professionals building careers in data, AI, and product, understanding how these systems work at the architectural level (not just as a user, but as someone who can design, deploy, and govern them) is becoming a core competency.

If you want to understand how these agentic architectures connect to the broader evolution of AI reasoning systems, the Eduinx explainer on RAG Evolution fills in the technical foundation that most PM-focused articles skip.

What This Means for Your Career

"AI will eliminate 80% of product managers' work by 2030," according to a Gartner projection. The realistic reading of that isn't that PMs disappear. It's that the work composition changes dramatically. Routine coordination, status tracking, backlog grooming, and sprint reporting become agent responsibilities. What remains for humans is the work that requires judgment: stakeholder management, risk prioritization, trade-off decisions, and organizational navigation.

That's not a smaller job. In many ways it's a harder one, because you're now managing a system that includes AI agents alongside people. You need to understand what the agents are doing, where they're likely to be wrong, and how to configure them effectively.

The professionals who will thrive in this environment aren't those who know the most about any single tool. They're the ones who understand the underlying principles — goal-based agents, multi-agent coordination, human-in-the-loop design, and agentic workflow orchestration — well enough to apply them across whatever platform their organization uses.

Wrapping Up

The shift from AI assistants to AI agents in PM tools is not a feature update. It's a change in who — or what — is responsible for keeping a product moving between human decisions.

The tools are here, and they're maturing fast. Jira with Rovo, Asana AI Studio, Linear's Triage Agents, and ClickUp Brain each represent a different take on the same core question: how much autonomous action should the system be allowed to take, and under what conditions?

Getting that configuration right — and understanding the design principles behind it — is the new PM skill set. Not because it's impressive, but because the teams that get it right will ship faster, with less overhead, and catch risks earlier than those still running products on manual updates.

The assistant era is over. The agent era is already here.