AI used to live in the cloud. You would send data up, wait for results to come back, and act on them seconds or sometimes minutes later. For most enterprise applications, that lag was acceptable. But now, with billions of sensors streaming data from factory floors, hospital wards, vehicles, and smart cities, waiting for the cloud is not an option anymore.

Edge AI changes that equation entirely. It runs intelligence directly on the device or gateway where data is generated, cutting out the round trip to a centralized server. In 2026, this is no longer a niche architecture choice; it is fast becoming the default for any data science application where timing, privacy, or connectivity cannot be taken for granted.

If you are a data professional trying to stay ahead of where the field is going, understanding Edge AI trends is no longer optional.

What Is Edge AI and Why Does It Matter Now?

Edge AI refers to deploying artificial intelligence models directly on local devices (sensors, cameras, industrial gateways, wearables, or smartphones) instead of in a distant cloud data center. Inference happens right where the data is generated. Three developments explain why this matters now.

First, specialized hardware has matured dramatically. Neural Processing Units (NPUs) and dedicated AI accelerators are now standard across devices ranging from smartphones to industrial equipment. These chips can deliver up to 10 trillion operations per second while consuming just 2.5 watts of power, roughly six times more efficient than traditional CPUs for neural network inference. That energy efficiency changes the economics of deploying AI at scale across thousands of remote devices.

Second, model compression techniques have reached production maturity. Large AI models are being quantized, pruned, and distilled down to run on constrained hardware without meaningful accuracy loss. Dell's Jeff Clarke put it plainly in his January 2026 predictions: "Micro LLMs, compact, task-specific models optimized for efficiency are moving intelligence to the edge. These models require less compute, less power, and will live on devices."

Third, regulatory pressure is mounting. The EU AI Act, now fully enforceable in 2026, requires high-risk AI systems to be auditable and explainable. For many organizations, keeping data on-premises is the simplest path to compliance. Edge AI makes that possible without sacrificing analytical capability.

🎯 Pro Tip: Get Comfortable with Model Optimization Early

The core skill that separates cloud AI engineers from edge AI engineers is model optimization: quantization, pruning, and knowledge distillation. Frameworks like TensorFlow Lite, ONNX Runtime, and PyTorch Mobile are your starting points. Even a basic understanding of these pipelines will make your profile stand out significantly in the job market.
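To make quantization less abstract, here is a minimal sketch of 8-bit affine quantization in plain Python. It is an illustration of the general idea, not how TensorFlow Lite or ONNX Runtime implement it internally; the function names and the sample weights are made up for this example.

```python
def quantize_int8(weights):
    """Map floats to int8 values using a scale and a zero point."""
    # Widen the range so that 0.0 is always exactly representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    scale = (w_max - w_min) / 255.0 or 1.0  # avoid a zero scale for all-zero input
    zero_point = round(-128 - w_min / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point)) for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from the int8 representation."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical layer weights, chosen only for illustration.
weights = [-1.2, -0.4, 0.0, 0.3, 0.9, 2.1]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print(f"scale={scale:.5f}, zero_point={zero_point}, max error={max_error:.5f}")
```

Each weight now takes one byte instead of four, and the reconstruction error stays within about half a quantization step. Production toolchains add calibration data and per-channel scales on top of this basic idea.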

The Hardware Revolution Powering Edge Intelligence

You cannot talk about Edge AI trends without talking about the silicon that makes it possible. The hardware layer is what has changed most dramatically in the last 18 months.

NPUs are now embedded in everything from industrial cameras to consumer smartphones. Unlike general-purpose CPUs and GPUs, NPUs are architected specifically for the matrix operations that neural networks rely on. The result is that AI inference that would have required a rack-mounted server a few years ago can now run on a chip smaller than a fingernail.
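A quick back-of-the-envelope calculation shows what the figures quoted earlier (10 trillion operations per second at 2.5 watts, roughly six times CPU efficiency) imply. The fleet size of 1,000 devices is a hypothetical number chosen for illustration.

```python
# Figures quoted earlier in this article.
NPU_TOPS = 10.0         # trillion operations per second
NPU_WATTS = 2.5         # power draw in watts
EFFICIENCY_RATIO = 6.0  # "roughly six times more efficient" than a CPU

npu_tops_per_watt = NPU_TOPS / NPU_WATTS
implied_cpu_tops_per_watt = npu_tops_per_watt / EFFICIENCY_RATIO


def fleet_power_kw(device_count, watts_per_device=NPU_WATTS):
    """Total inference power draw of a fleet, in kilowatts."""
    return device_count * watts_per_device / 1000.0


print(f"NPU efficiency: {npu_tops_per_watt:.1f} TOPS/W")
print(f"Implied CPU efficiency: {implied_cpu_tops_per_watt:.2f} TOPS/W")
print(f"1,000 devices (hypothetical fleet): {fleet_power_kw(1000):.1f} kW")
```

In other words, the quoted chip works out to about 4 TOPS per watt, and a thousand of them running inference flat out would draw about as much power as a couple of household kettles.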

Beyond NPUs, neuromorphic computing is beginning to move from research labs toward production. These chips mimic the event-driven, spike-based communication of biological neurons. For applications like anomaly detection on continuous sensor streams, where most of the time nothing interesting is happening, neuromorphic architectures can reduce power consumption to levels that are simply not achievable with conventional processors. Oil rig vibration sensors that need to run for months on battery power are a prime example.
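The event-driven idea can be sketched in software with a leaky integrate-and-fire neuron, a common textbook model of spiking behavior. This is a simplified simulation under assumed parameters (`threshold`, `leak`, and the synthetic signal are all hypothetical), not a description of how any particular neuromorphic chip is programmed.

```python
def lif_spikes(samples, threshold=1.0, leak=0.9):
    """Leaky integrate-and-fire: return the time steps at which the neuron fires."""
    potential = 0.0
    spikes = []
    for t, x in enumerate(samples):
        # Past input decays; new input magnitude is accumulated.
        potential = potential * leak + abs(x)
        if potential >= threshold:
            spikes.append(t)
            potential = 0.0  # reset after firing
    return spikes


# Synthetic vibration signal: long quiet periods around one short burst.
quiet = [0.01] * 50
burst = [0.8, 0.9, 0.7]
signal = quiet + burst + quiet

spikes = lif_spikes(signal)
print(f"{len(signal)} samples in, {len(spikes)} event(s) out at steps {spikes}")
```

The point is that downstream work happens only when a spike is emitted. During the quiet stretches nothing fires, which is where the power savings come from on hardware built around this model.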

Key Edge AI Applications Reshaping Data Science

Understanding where Edge AI is actually delivering results matters more than any abstract definition. Across industries, the patterns are clear.

Manufacturing and Predictive Maintenance

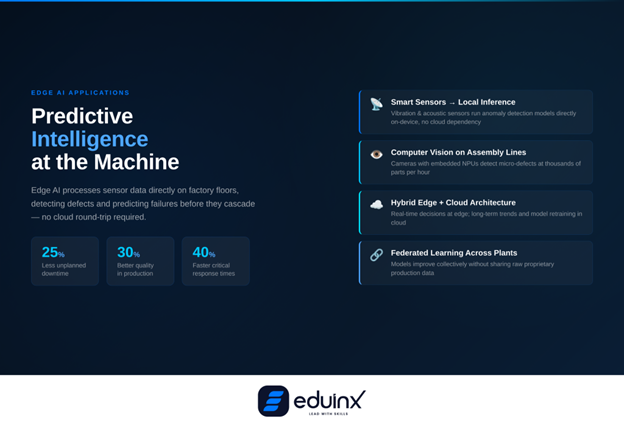

Manufacturing is where Edge AI has found its most immediate and measurable footing. Quality inspection cameras on assembly lines now run computer vision models locally, processing thousands of parts per hour without sending image data to remote servers. An automotive plant defect detection system can identify microscopic flaws that human inspectors would miss, at full production speed.

Predictive maintenance is equally significant. Vibration sensors on industrial equipment analyze acoustic patterns continuously to detect anomalies before they cascade into failures. Real deployments report up to 25% reductions in unplanned downtime. That is not a marginal efficiency gain. For a high-throughput manufacturing facility, it translates to millions in avoided losses per year.
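As a rough illustration of on-device anomaly detection, the sketch below flags readings that deviate sharply from a rolling baseline. A rolling z-score is one simple technique among many and is an assumption here, not the method used in the deployments mentioned above; the class name, window size, and threshold are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flag readings that sit far outside the recent baseline."""

    def __init__(self, window=50, z_threshold=4.0):
        self.window = deque(maxlen=window)
        self.z_threshold = z_threshold

    def update(self, value):
        """Return True if the value is anomalous relative to the window."""
        is_anomaly = False
        if len(self.window) == self.window.maxlen:
            mu = mean(self.window)
            sigma = pstdev(self.window)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep outliers out of the baseline so they do not mask later ones.
            self.window.append(value)
        return is_anomaly


# Synthetic vibration amplitudes: a steady pattern followed by one spike.
readings = [1.0 + 0.05 * ((i % 5) - 2) for i in range(100)] + [3.0]
detector = RollingAnomalyDetector()
flags = [detector.update(r) for r in readings]
print("anomalies at indices:", [i for i, f in enumerate(flags) if f])
```

Something this small fits comfortably on a microcontroller-class device. A real deployment would more likely work on frequency-domain features or a learned model, but the control flow (keep a local baseline, act only on deviations) is the same.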

Hybrid architectures are emerging as the practical choice here. The edge handles real-time decisions: defect detection, robot coordination, safety monitoring. The cloud handles long-term trend analysis and model retraining across multiple facilities. Companies adopting this split architecture report 40% faster response times for critical operations while cutting cloud costs by 30 to 50%.

Smart Cities and Real-Time Urban Analytics

Smart city infrastructure is one of the largest embedded AI deployments underway. Traffic management systems use edge-deployed computer vision to adjust signal timing based on real-time congestion, rather than fixed schedules built on historical averages. Environmental sensors analyze air quality and noise data on-device, triggering alerts without waiting for central server responses.

The scale involved here is what makes edge processing essential. A city with thousands of camera nodes and environmental sensors simply cannot route all raw data to a central location; the bandwidth cost alone would be prohibitive. Edge AI filters, transforms, and acts on data locally, transmitting only meaningful summaries or alerts upstream.
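Here is a minimal sketch of that "summarize locally, send only what matters" pattern. The payload fields, the alert threshold, and the synthetic readings are all assumptions made for illustration.

```python
import json
from statistics import mean


def summarize(readings, alert_threshold):
    """Reduce a batch of raw readings to a compact summary payload."""
    peak = max(readings)
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": peak,
        "alert": peak > alert_threshold,
    }


# Hypothetical air-quality readings for one reporting interval.
raw = [40 + (i % 7) for i in range(600)]
raw[300] = 180  # a single pollution spike

summary = summarize(raw, alert_threshold=150)
raw_bytes = len(json.dumps(raw))
summary_bytes = len(json.dumps(summary))

print(summary)
print(f"raw payload: {raw_bytes} bytes, summary payload: {summary_bytes} bytes")
```

Multiply the difference in payload size by thousands of nodes reporting every few seconds and the bandwidth argument for edge processing becomes concrete.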

Federated learning is an important technical enabler in this context. It allows AI models to improve collectively across thousands of distributed edge devices without any raw data ever being centralized. Each node trains locally on its own data and shares only model weight updates, not the underlying readings. This solves both the privacy problem and the bandwidth problem simultaneously. The same distributed intelligence principle is also reshaping how hyper-personalization engines deliver real-time recommendations at the application layer: inference is pushed closer to the point of need, whether at the edge of a network or the edge of a user session.
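The aggregation step at the heart of federated learning can be sketched in a few lines. This follows the widely used federated averaging idea (weight each node's update by how much data it trained on); the function name and the toy numbers are hypothetical, and real frameworks add secure aggregation, client sampling, and much more.

```python
def federated_average(client_updates):
    """Combine per-node weights into a global model.

    client_updates is a list of (weights, num_samples) pairs. Only these
    weights leave each node; the raw readings never do.
    """
    total_samples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [
        sum(weights[i] * n for weights, n in client_updates) / total_samples
        for i in range(dim)
    ]


# Two hypothetical edge nodes with different amounts of local data.
updates = [
    ([1.0, 2.0], 100),  # node A trained on 100 samples
    ([3.0, 4.0], 300),  # node B trained on 300 samples
]
global_weights = federated_average(updates)
print(global_weights)
```

Node B contributes three times as much data, so the global model lands three quarters of the way toward its weights.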

🔍 Pro Tip: Learn Federated Learning Before It Becomes a Job Requirement

Federated learning is already moving from research papers into production deployments in healthcare, manufacturing, and smart city applications. Getting familiar with frameworks like PySyft or NVIDIA FLARE now puts you ahead of the curve. The engineers who understand both distributed systems and ML will be the ones leading these projects.

Edge AI for Data Scientists: What This Means for Your Career

The rise of Edge AI does not replace data science; it extends it into new and more constrained environments. But it does change the skills profile that employers are looking for.

Cloud-centric data science has largely been about working with large datasets, training powerful models on ample compute, and deploying to scalable infrastructure. Edge AI adds a different set of constraints: models must be small enough to fit on device, efficient enough to run on limited power budgets, and robust enough to work without constant connectivity. This is a meaningful shift from the retrieval-augmented patterns that have dominated enterprise AI, where systems like agentic RAG frameworks pull from centralized knowledge stores, toward architectures where the intelligence itself must be embedded and self-contained.

The practical skills that matter most in this transition are model quantization and compression, familiarity with edge-friendly frameworks (TFLite, ONNX, Core ML), and an understanding of hardware-software co-design. Knowing how a model will perform on a specific NPU is a very different skill from knowing how it performs on a GPU cluster.
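A useful first habit is checking whether a model can fit on the target device at all. The sketch below estimates weight storage at different precisions; the parameter count and the 32 MB budget are hypothetical, and the estimate covers weights only, not activations or runtime overhead.

```python
def model_size_mb(num_parameters, bits_per_weight):
    """Approximate weight storage in megabytes (weights only)."""
    return num_parameters * bits_per_weight / 8 / 1_000_000


def fits_on_device(num_parameters, bits_per_weight, budget_mb):
    """Check the estimated size against a device storage budget."""
    return model_size_mb(num_parameters, bits_per_weight) <= budget_mb


PARAMS = 25_000_000  # hypothetical model size
BUDGET_MB = 32       # hypothetical storage budget on the device

for bits in (32, 16, 8):
    size = model_size_mb(PARAMS, bits)
    verdict = fits_on_device(PARAMS, bits, BUDGET_MB)
    print(f"{bits}-bit weights: {size:.0f} MB, fits in {BUDGET_MB} MB: {verdict}")
```

In this made-up case the model only fits once it is quantized to 8 bits, which is exactly the kind of constraint that rarely comes up on a GPU cluster.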

There is also an important overlap with MLOps. Managing and updating AI models across potentially thousands of distributed edge devices is a genuine operational challenge, one that does not have clean solutions yet.

If you are already building skills in AI orchestration and multi-agent workflows, which is where a lot of PM and engineering attention is focused, the edge inference layer is a natural adjacent skill set to develop. The intersection of agentic reasoning and edge-based sensor intelligence is where some of the most interesting next-generation data science applications are being built.

💡 Pro Tip: Start with a Concrete Edge Use Case, Not Theory

The fastest way to develop real edge AI intuition is to pick one applied use case and build end-to-end. Predictive maintenance using a public vibration sensor dataset, or a simple on-device image classifier using TFLite on a Raspberry Pi, will teach you more than reading a dozen architecture papers. Employers value demonstrated projects in this space significantly over theoretical knowledge.

Challenges You Should Know About

Edge AI is not without genuine complexity. The EU AI Act's auditability requirements create real operational challenges for edge deployments: maintaining documentation about model decisions across thousands of distributed devices is technically non-trivial.
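One way to approach per-device decision records is a tamper-evident log in which each entry includes a hash of the previous one. The sketch below is an assumption about what such a log could look like, not a statement of what the EU AI Act requires; the field names are hypothetical, and timestamps are left out to keep the example deterministic.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(record):
    """Stable SHA-256 over a record's JSON form."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log, device_id, model_version, input_sha256, decision):
    """Append a decision record that is chained to the previous one."""
    record = {
        "device_id": device_id,
        "model_version": model_version,
        "input_sha256": input_sha256,  # digest of the input, not the raw data
        "decision": decision,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record["hash"] = _digest(record)  # digest is computed before "hash" is set
    log.append(record)
    return record


def verify_chain(log):
    """Return True if no record has been altered or removed mid-chain."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log = []
frame = hashlib.sha256(b"frame-0001").hexdigest()
append_record(log, "cam-17", "defect-v3.2", frame, "reject")
append_record(log, "cam-17", "defect-v3.2", frame, "accept")
print("chain valid:", verify_chain(log))
```

Logging a digest of the input rather than the input itself keeps raw data on the device while still letting an auditor tie each decision to a specific model version.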

Device management at scale is another open problem. Updating AI models across 20,000 edge nodes deployed in remote or inaccessible locations requires robust orchestration infrastructure that many organizations are still figuring out.
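A common pattern for fleet updates is a staged rollout: push the new model to a small canary group first, check failure rates, then widen. The sketch below shows that idea under assumed wave sizes (1%, 10%, 100%) and an assumed 2% failure tolerance; the function names and node IDs are hypothetical.

```python
def rollout_waves(device_ids, cumulative_percents=(1, 10, 100)):
    """Split a fleet into successive rollout waves, canary group first."""
    waves, start = [], 0
    for pct in cumulative_percents:
        end = len(device_ids) * pct // 100
        waves.append(device_ids[start:end])
        start = max(start, end)
    return waves


def should_continue(failures, attempted, max_failure_rate=0.02):
    """Gate the next wave on the failure rate of the previous one."""
    return attempted > 0 and failures / attempted <= max_failure_rate


fleet = [f"node-{i:05d}" for i in range(20_000)]
waves = rollout_waves(fleet)
print("wave sizes:", [len(w) for w in waves])

# Example gate: 3 failed updates out of the 200-device canary wave.
print("proceed to wave 2:", should_continue(failures=3, attempted=len(waves[0])))
```

The hard parts in practice are everything around this loop: devices that are offline for days, rollbacks, and confirming that the new model actually behaves well on each hardware variant.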

Conclusion

Edge AI is not coming; it is already reshaping how data-intensive industries operate, and the pace is accelerating. The combination of mature NPU hardware, efficient small language models, federated learning, and 5G connectivity has removed most of the technical barriers that held edge deployment back even two years ago.

For data science professionals, this creates a clear signal: the value of your skills is increasingly tied to how well you can operate in constrained, distributed environments, not just cloud-scale ones. The practitioners who learn to compress, deploy, and govern AI at the edge will find themselves working on problems with direct, measurable impact in manufacturing, healthcare, autonomous systems, and urban infrastructure.